Recurring Patterns of Constraint Misalignment

Why do organisations, communities, and societies become unstable?

Political crises, economic decline, organisational dysfunction, social fragmentation, and governance failures often appear unique. Beneath the surface, however, they frequently exhibit recurring patterns.

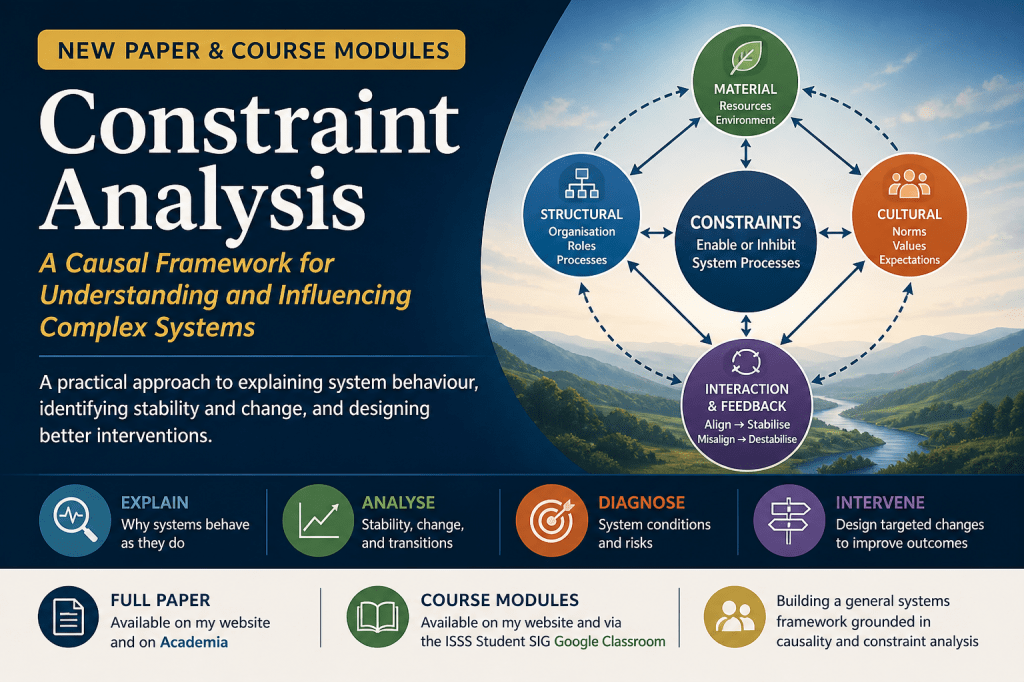

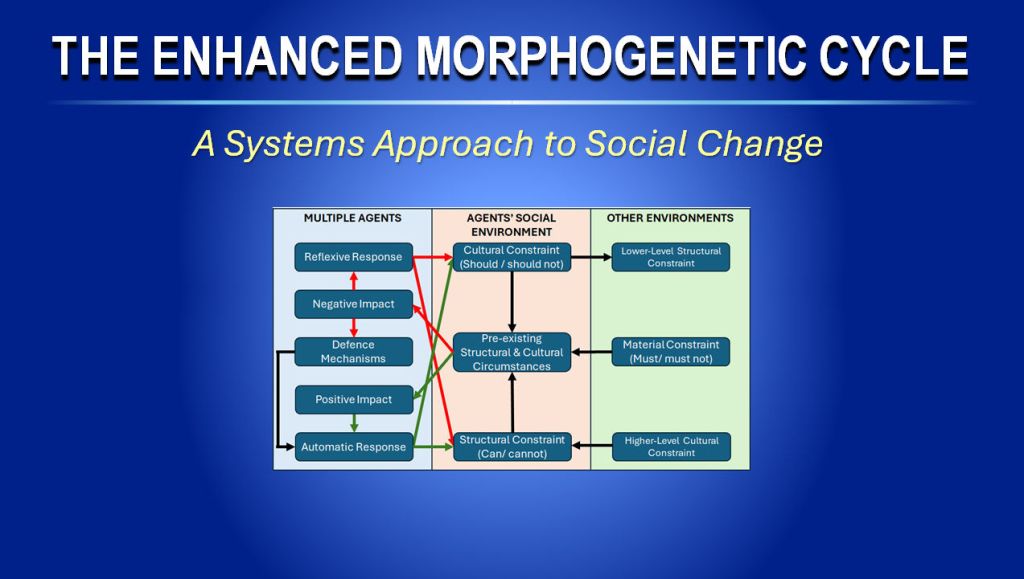

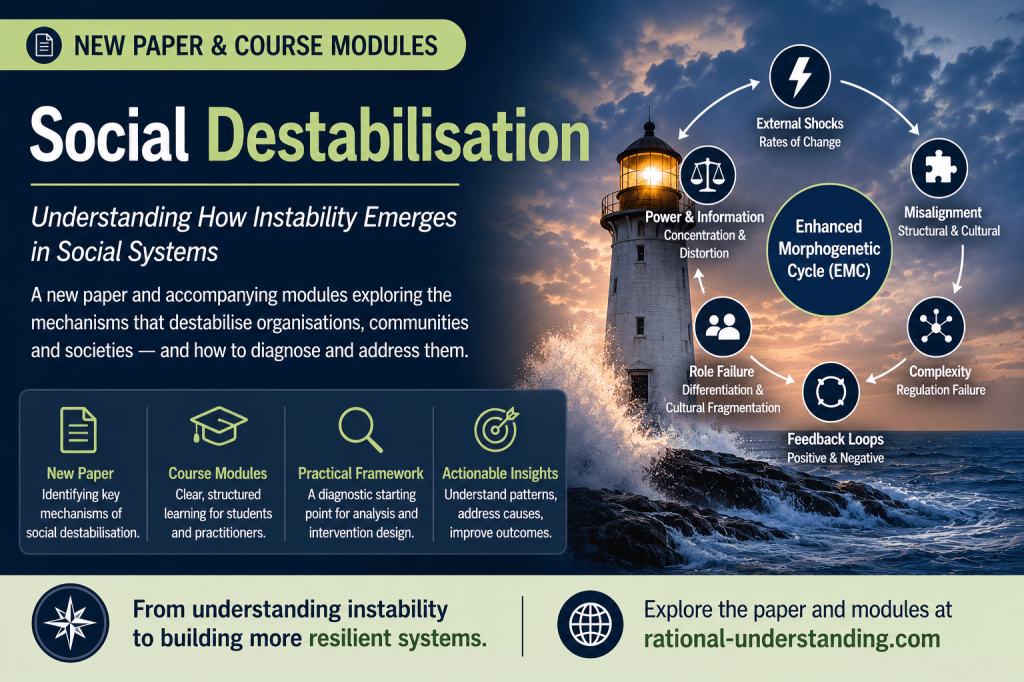

In this new paper, Social Destabilisation, I explore how instability can emerge from the misalignment of constraints within social systems. Drawing upon the Enhanced Morphogenetic Cycle (EMC) and Constraint Analysis, the paper identifies several recurring destabilising mechanisms, including:

• External shocks and differential rates of change

• Structural and cultural misalignment

• Complexity and constraint regulation failure

• Positive feedback and resource depletion

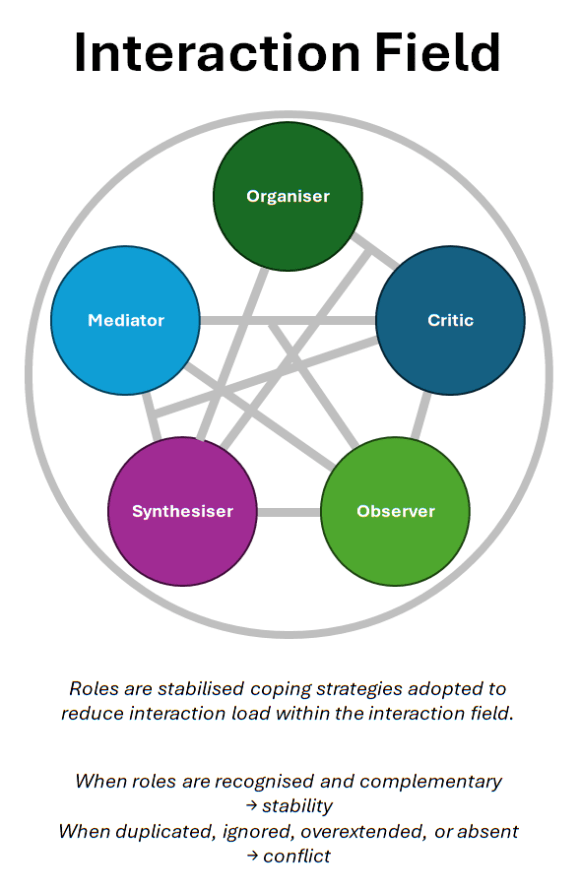

• Role differentiation failure and cultural fragmentation

• Power concentration and feedback distortion

Rather than treating crises as isolated events, the paper argues that many can be understood as recurring patterns of constraint misalignment.

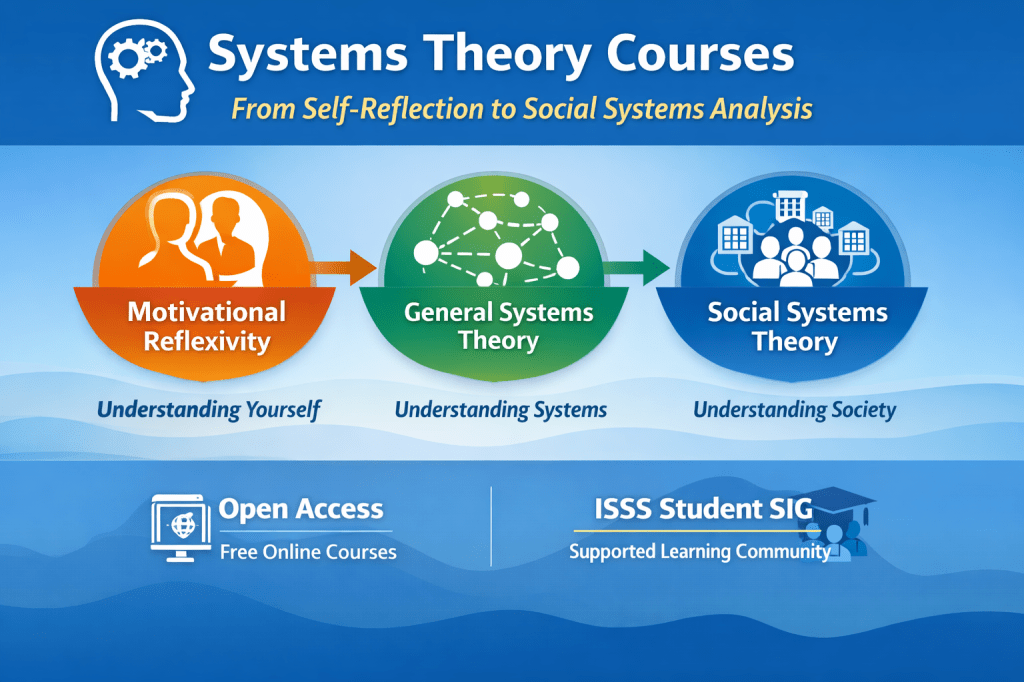

The paper is accompanied by a series of course modules designed to make the concepts accessible to students, practitioners, and anyone interested in understanding how social systems change.

The final section introduces a practical diagnostic framework that can be used as a starting point for more detailed constraint analysis and intervention design.

Understanding instability is often the first step towards improving stability, adaptability, and long-term viability.

As always, this paper and the course modules are open access and can be read here:

Paper: https://www.academia.edu/168127594/Recurring_Patterns_of_Constraint_Misalignment

https://rational-understanding.com/sst/

Course Modules: